Build Privacy-Aware AI Agents With Google ADK

Enterprise AI agents are becoming genuinely useful — they can query CRM records, search call transcripts, invoke external APIs, and reason across multiple data sources. But the more capable they get, the harder it becomes to ensure sensitive data doesn't leak through the wrong channel.

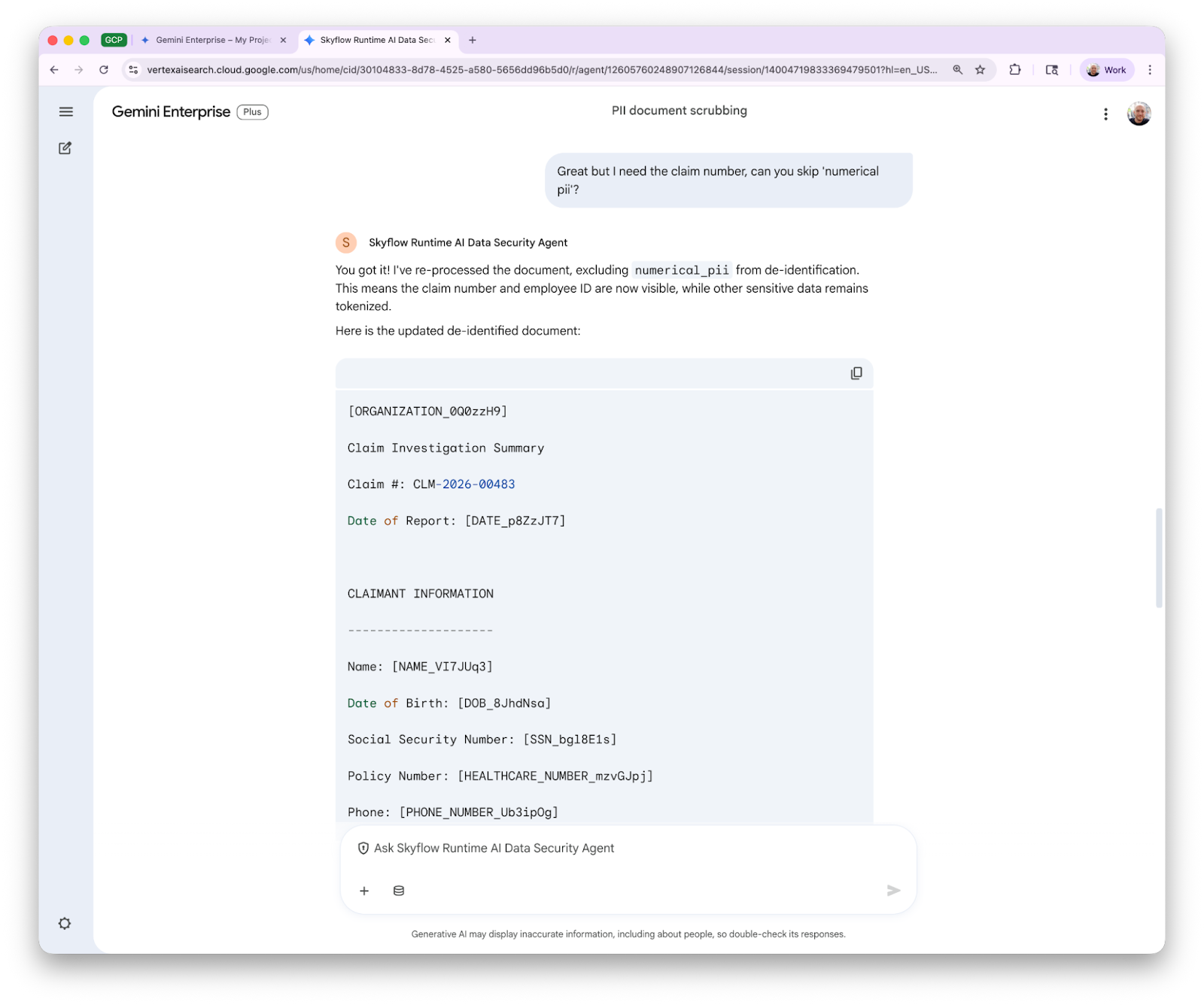

We just launched the Skyflow Runtime Data Security Agent on Gemini Enterprise Agent Marketplace. It's a privacy-focused agent built on Google's Agent Development Kit (ADK) that can de-identify and re-identify sensitive data, and provide actionable guidance on GenAI security risks grounded in the OWASP GenAI Data Security Risks & Mitigations 2026 report. You can install it in Gemini Enterprise today, consume it as a subagent in your own ADK agents, or call it directly over A2A from Vertex Agents, Antigravity, or any A2A-compatible client.

This is how we built it.

The Goal

The point of this agent is to make Skyflow's data privacy operations — de-identification, re-identification, and security guidance — available as a composable service that other agents and clients can reach through a standard protocol. Not a library you import, not an SDK you wire up — a running agent with a discoverable API that any A2A-capable client can authenticate with and call.

That means building something that works as a standalone agent in Gemini Enterprise, as a subagent delegated to by other ADK or Vertex agents, and as a JSON-RPC endpoint hit by custom tooling. The A2A protocol and Google's ADK make all three possible from the same codebase.

The Stack

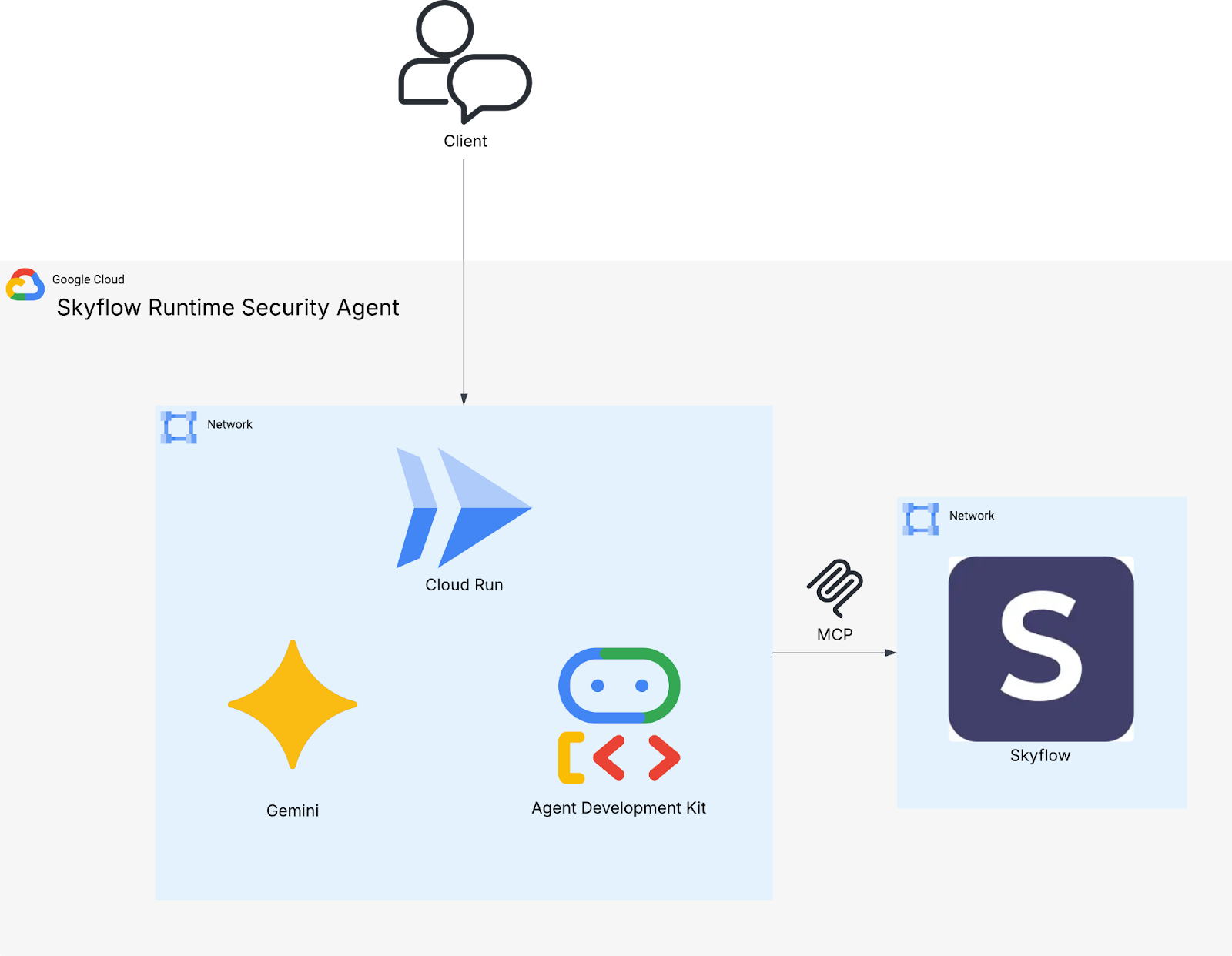

- Google ADK (google-adk[a2a]) — the agent framework, with built-in support for MCP tools, A2A, and file-based skills

- Gemini on Vertex AI — private model hosting; the model runs in your GCP project, not through the public Gemini API

- Cloud Run — containerized deployment with GCP Secret Manager for credential storage

- A2A protocol — standardized JSON-RPC interface for agent-to-agent and client-to-agent interactions

- Skyflow Runtime MCP Server (preview) — standards-compliant MCP interface for Skyflow's de-identification and re-identification APIs

- Gemini Enterprise — where end users actually talk to the agent, with OAuth-based authentication and one-click install from Agent Marketplace

The Agent

The agent itself is a standard ADK Agent backed by Gemini:

    from google.adk.agents.llm_agent import Agent

    # _instruction (the system prompt) is defined elsewhere in the module;
    # the two toolsets are built in the Skills and Tools sections below.
    root_agent = Agent(
        model="gemini-2.5-flash",
        name="skyflow_privacy_agent",
        description=(
            "Handles data privacy operations using Skyflow: "
            "tokenizing (dehydrating) and detokenizing (rehydrating) sensitive data."
        ),
        instruction=_instruction,
        tools=[skyflow_toolset, skill_toolset],
    )

With GOOGLE_GENAI_USE_VERTEXAI=True, this routes through Vertex AI — so when you're building agents that handle sensitive data, the model inference stays inside your GCP project boundary.
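As a minimal sketch of that configuration, the snippet below sets the relevant environment variables before the agent module is imported. GOOGLE_GENAI_USE_VERTEXAI comes from the text above; GOOGLE_CLOUD_PROJECT and GOOGLE_CLOUD_LOCATION are the companion variables the Google Gen AI SDK reads when targeting Vertex AI. The helper name, project ID, and region are placeholders, not values from our deployment.

```python
import os


def configure_vertex_env(project: str, location: str) -> dict:
    """Point the Gen AI SDK at Vertex AI instead of the public Gemini API.

    Must run before the agent (and its model client) is created.
    Returns the settings that were applied, for logging or inspection.
    """
    settings = {
        "GOOGLE_GENAI_USE_VERTEXAI": "True",
        "GOOGLE_CLOUD_PROJECT": project,    # placeholder project ID
        "GOOGLE_CLOUD_LOCATION": location,  # placeholder region
    }
    os.environ.update(settings)
    return settings


vertex_env = configure_vertex_env("my-gcp-project", "us-central1")
```

On Cloud Run you would more likely set these as service environment variables at deploy time; doing it in code is mainly handy for local testing.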

The agent has two types of capabilities:

- MCP tools for data privacy operations (via Skyflow)

- Skills for security domain knowledge, via the ADK's experimental skills system

More on both below.

A2A: The Protocol That Ties It Together

The agent publishes an Agent Card at .well-known/agent.json that describes what it can do and how to authenticate:

    {
      "name": "Skyflow Runtime Data Security Agent",
      "description": "An agent specialized in data privacy operations...",
      "skills": [
        {
          "id": "data_deidentification",
          "name": "Data De-identification",
          "description": "Tokenize and de-identify sensitive data in text...",
          "tags": ["privacy", "pii", "tokenization", "skyflow"]
        },
        {
          "id": "data_reidentification",
          "name": "Data Re-identification",
          "description": "Detokenize previously de-identified data...",
          "tags": ["privacy", "detokenization", "skyflow"]
        }
      ],
      "version": "1.1.0"
    }

A2A gets described as "agent-to-agent," but it's really just a standardized API for interacting with agents. Gemini Enterprise uses it. Other ADK agents use it via RemoteA2aAgent. And any client that speaks JSON-RPC can call the agent directly:

    curl -X POST "$AGENT_BASE_URL/a2a/skyflow_privacy_agent/" \
      -H "Content-Type: application/json" \
      -d '{
        "jsonrpc": "2.0",
        "method": "message/send",
        "params": {
          "message": {
            "role": "user",
            "parts": [{"kind": "text", "text": "De-identify this: My SSN is 123-45-6789"}],
            "messageId": "test-1"
          }
        },
        "id": "1"
      }'
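The same call can be made from custom tooling without any agent framework. The sketch below mirrors the curl request using only the Python standard library. The function names are hypothetical, and the optional bearer token parameter is an assumption about how a secured deployment would be called, not something the curl example shows.

```python
import json
import urllib.request
import uuid
from typing import Optional


def build_message_send(text: str, request_id: str = "1",
                       message_id: Optional[str] = None) -> dict:
    """Build the JSON-RPC 2.0 envelope for an A2A message/send call."""
    return {
        "jsonrpc": "2.0",
        "method": "message/send",
        "params": {
            "message": {
                "role": "user",
                "parts": [{"kind": "text", "text": text}],
                # Each message needs its own ID; generate one if not supplied.
                "messageId": message_id or str(uuid.uuid4()),
            }
        },
        "id": request_id,
    }


def send_message(base_url: str, text: str,
                 bearer_token: Optional[str] = None,
                 timeout: float = 30.0) -> dict:
    """POST the request to the agent's A2A endpoint and return the parsed reply."""
    headers = {"Content-Type": "application/json"}
    if bearer_token:  # assumption: secured deployments expect a bearer token
        headers["Authorization"] = f"Bearer {bearer_token}"
    request = urllib.request.Request(
        f"{base_url.rstrip('/')}/a2a/skyflow_privacy_agent/",
        data=json.dumps(build_message_send(text)).encode("utf-8"),
        headers=headers,
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping the envelope builder separate from the network call makes the payload easy to unit test without a running agent.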

This is what makes the agent composable. Another ADK agent can wire it up as a subagent in a few lines and delegate privacy operations to it:

    from google.adk.agents.llm_agent import Agent
    from google.adk.agents.remote_a2a_agent import RemoteA2aAgent

    # The remote agent is resolved from its published Agent Card.
    privacy_agent = RemoteA2aAgent(
        name="privacy_agent",
        description="Handles data privacy operations using Skyflow.",
        agent_card="https://your-cloud-run-url/.well-known/agent.json",
    )

    # The local root agent delegates privacy work to the remote subagent.
    root_agent = Agent(
        model="gemini-2.5-flash",
        name="root_agent",
        instruction="You can protect sensitive data using the privacy_agent.",
        sub_agents=[privacy_agent],
    )

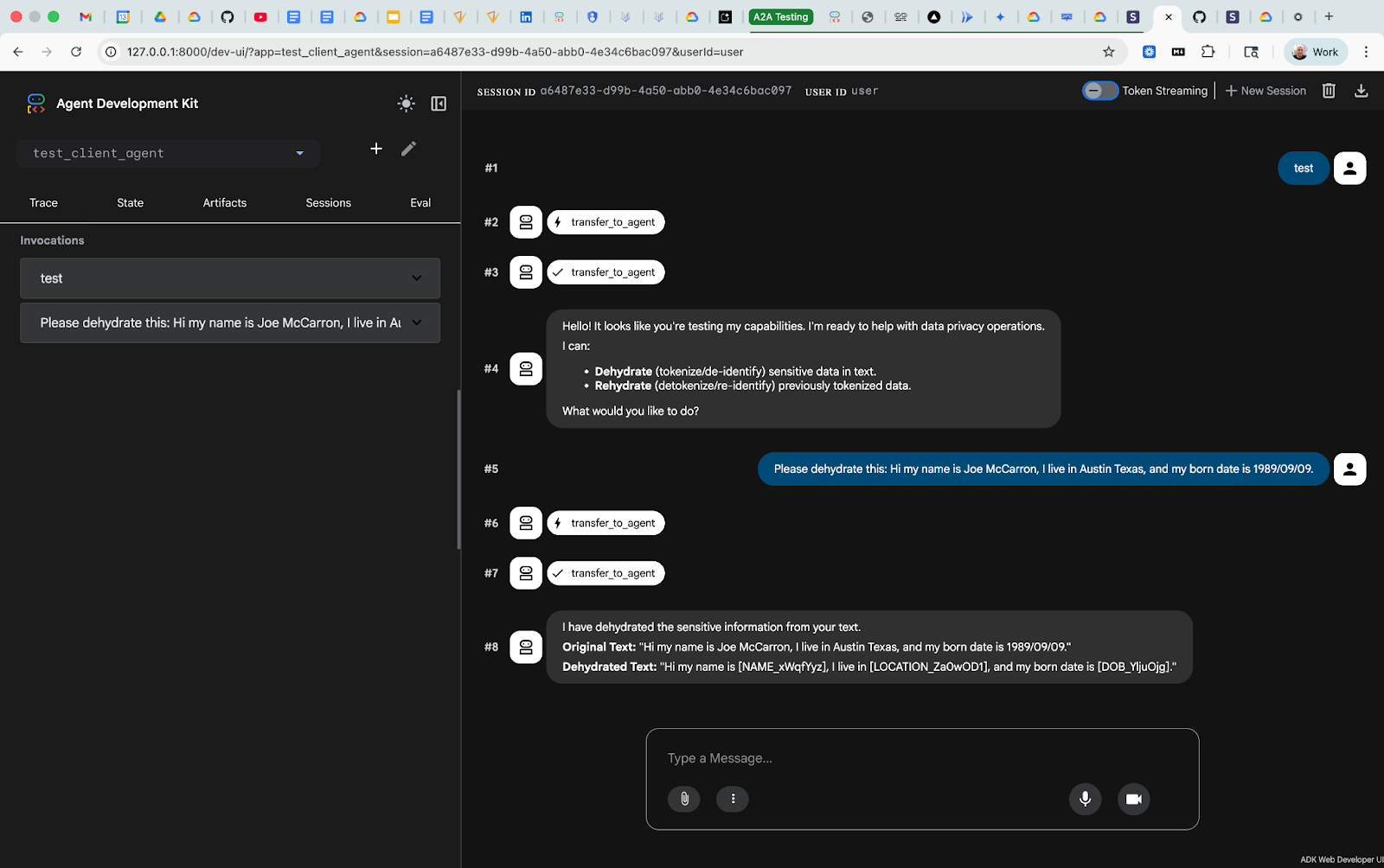

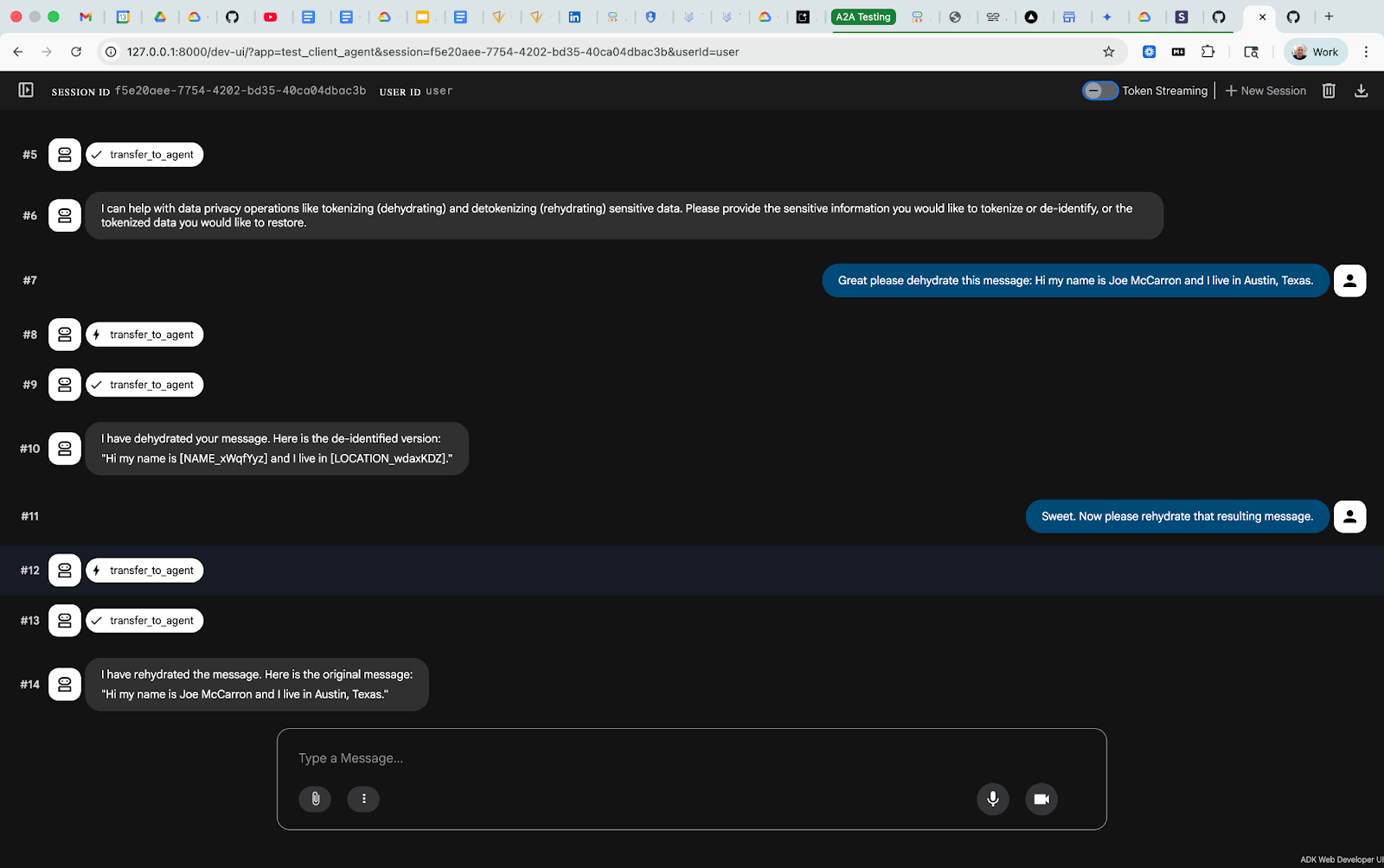

The ADK itself ships with a web client you can run locally to test your agent, and it supports multiple connection modalities: direct to the agent, as a subagent, and as part of a larger multi-agent system. The web UI gets your agent up and running for testing in seconds, and provides extensive developer-facing information: traces, state, artifacts, session metadata, multiple session management, and even an evals integration.

Skills: Enhanced Agent Capabilities Through Simple Markdown Files

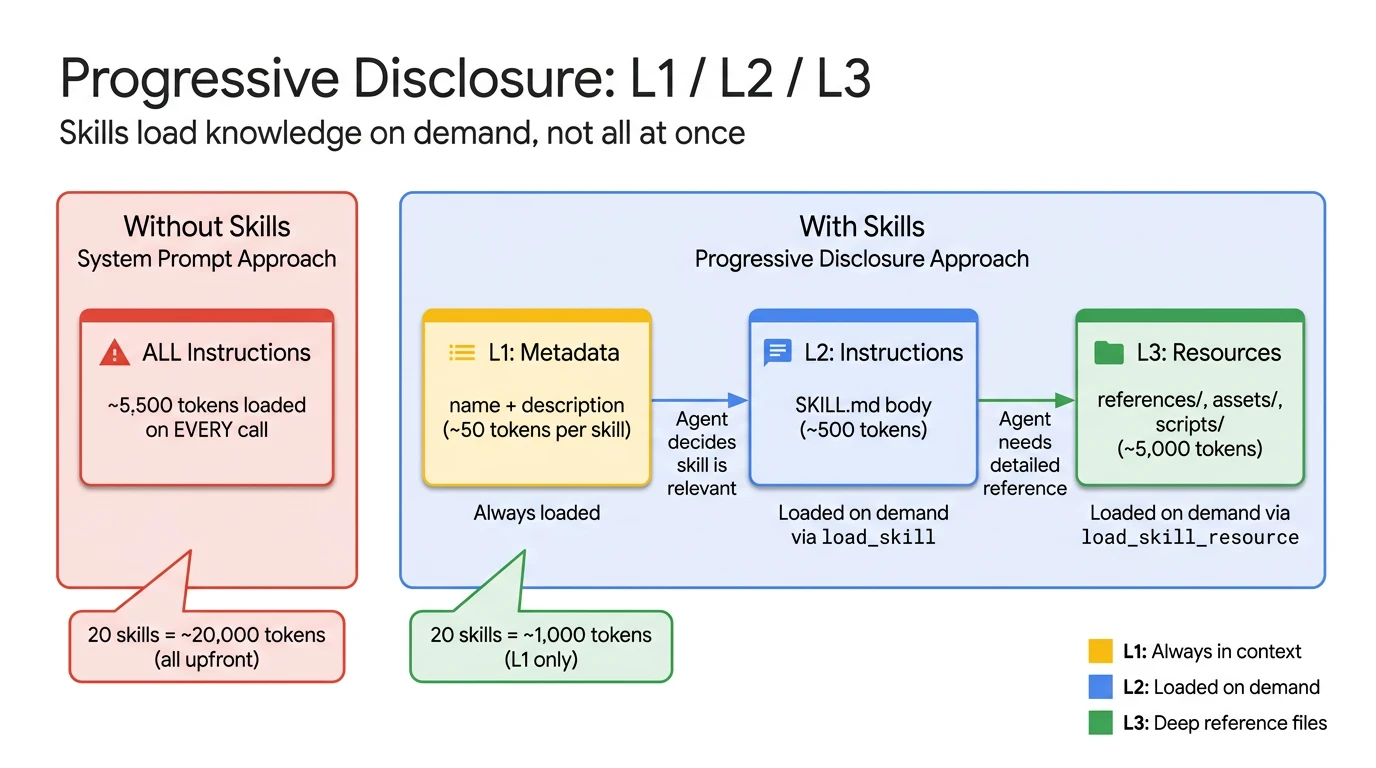

One of the ADK's more interesting (and still experimental) features is file-based skills. A skill is a directory with a SKILL.md file — YAML frontmatter for metadata, markdown for instructions — plus optional reference materials. The agent discovers and loads them at startup.
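To make the file layout concrete, here is a minimal sketch that splits a SKILL.md file into its frontmatter and its markdown instructions. It is an illustration of the format only, not the ADK's loader: the sample file, the `name` and `description` fields, and the `split_skill_file` helper are all assumptions, and the parser handles only flat `key: value` pairs rather than full YAML.

```python
from typing import Dict, Tuple

# Hypothetical skill file, used only to illustrate the layout.
SAMPLE_SKILL_MD = """\
---
name: example-skill
description: Hypothetical skill used only to illustrate the file layout.
---
# Instructions

Answer questions using the files under references/.
"""


def split_skill_file(text: str) -> Tuple[Dict[str, str], str]:
    """Return (frontmatter, body) for a SKILL.md-style document."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no frontmatter block at all
    # Find the line that closes the frontmatter block.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        return {}, text  # unterminated frontmatter: treat everything as body
    metadata: Dict[str, str] = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            metadata[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return metadata, body


metadata, instructions = split_skill_file(SAMPLE_SKILL_MD)
```

The metadata is what lets an agent decide whether a skill is relevant; the body is what it reads once it decides to use one.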

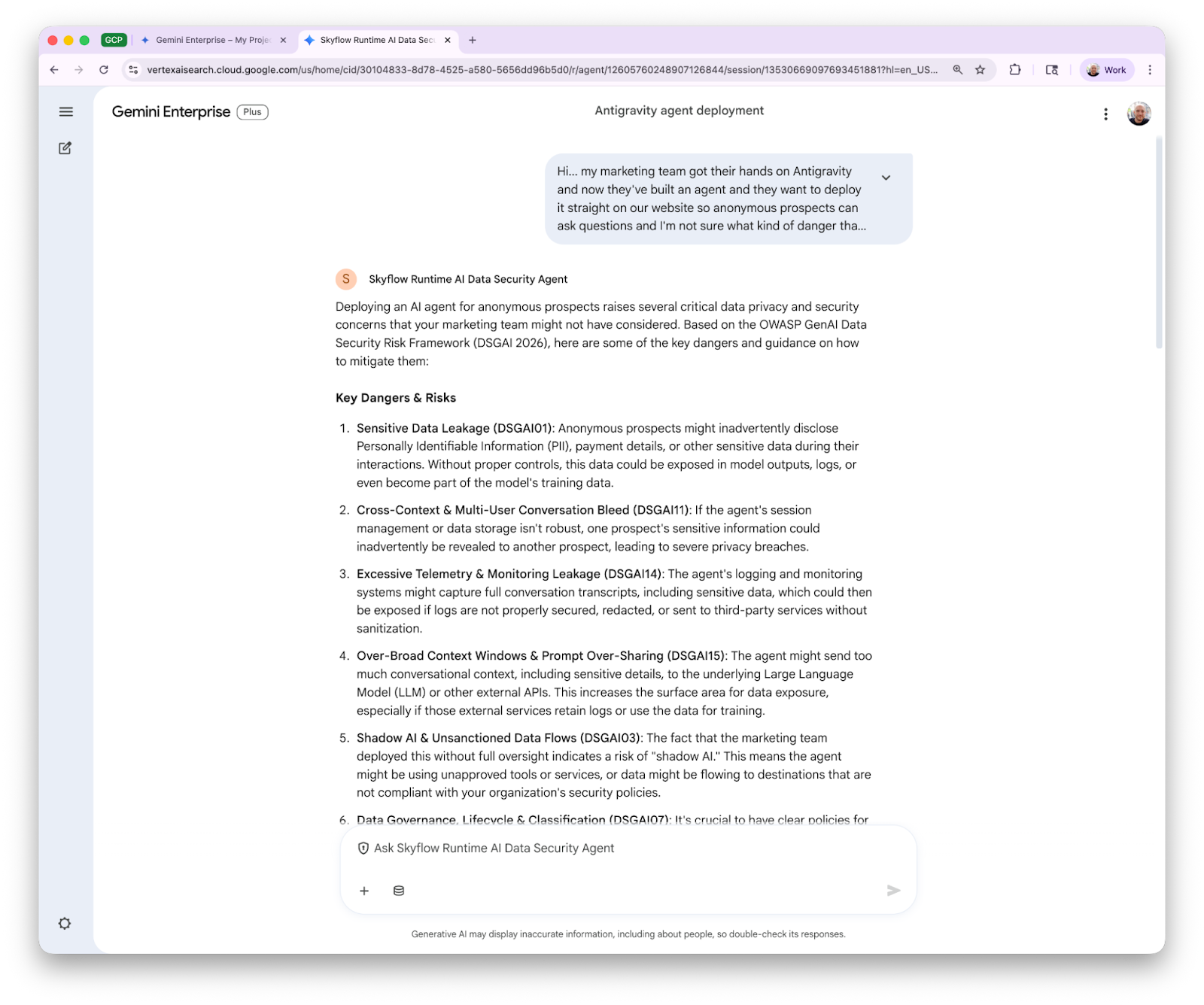

We built a skill that covers all 21 risks from the OWASP GenAI Data Security Risks and Mitigations 2026 report. Each risk gets its own deep-dive reference file with attack vectors, tiered mitigations, detection signals, and regulatory mappings:

    skills/
    └── owasp-ai-risks-and-mitigations-2026/
        ├── SKILL.md              # Skill definition + quick-reference
        └── references/           # Per-risk deep-dive files
            ├── dsgai01.md        # Sensitive Data Leakage
            ├── dsgai02.md        # Agentic Identity & Credential Exposure
            ├── ...
            └── dsgai21.md        # Disinformation & Integrity Attacks

This means the agent can assess risks, recommend mitigations, and cite specific OWASP risk IDs, grounded in reference material rather than whatever the model remembers from training or, even worse, pulls from the internet.

Loading skills is dead simple with the Google ADK. Add your skill directories (each with its SKILL.md file) to the project. Load them by path. Get a toolset.

    import pathlib

    from google.adk.skills import load_skill_from_dir
    from google.adk.tools.skill_toolset import SkillToolset

    _SKILLS_DIR = pathlib.Path(__file__).parent / "skills"

    # Load every subdirectory that contains a SKILL.md definition.
    _skills = []
    for skill_dir in sorted(_SKILLS_DIR.iterdir()):
        if skill_dir.is_dir() and (skill_dir / "SKILL.md").exists():
            _skills.append(load_skill_from_dir(skill_dir))

    skill_toolset = SkillToolset(skills=_skills)

Heads-up: If you're deploying with Cloud Build, make sure your .gcloudignore and .dockerignore aren't filtering out these .md files.
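Because the loader above silently skips any directory without a SKILL.md, a stripped build produces an agent that starts normally but has no skills. One way to make that failure loud is a startup check like the sketch below. The `find_incomplete_skills` helper is hypothetical and not part of the ADK.

```python
import pathlib
from typing import List


def find_incomplete_skills(skills_dir: pathlib.Path) -> List[str]:
    """Return the names of skill directories that are missing SKILL.md.

    Raises FileNotFoundError if the skills directory itself was not
    copied into the build, which is the other common packaging mistake.
    """
    if not skills_dir.is_dir():
        raise FileNotFoundError(f"skills directory not found: {skills_dir}")
    return sorted(
        child.name
        for child in skills_dir.iterdir()
        if child.is_dir() and not (child / "SKILL.md").exists()
    )
```

Calling this once at import time, and raising if the returned list is non-empty, turns a quiet misconfiguration into a failed deploy.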

Tools: Data De-Identification and Re-Identification, via MCP

The agent's de-identification and re-identification capabilities are powered by Skyflow’s Detect API for finding and tokenizing sensitive strings hidden in unstructured data. The agent connects to Skyflow's Runtime MCP Server (in preview), configured through the ADK's native McpToolset:

    from google.adk.tools.mcp_tool import McpToolset, StreamableHTTPConnectionParams

    skyflow_toolset = McpToolset(
        connection_params=StreamableHTTPConnectionParams(
            url=SKYFLOW_MCP_SERVER_URL,
            headers={"Authorization": f"Bearer {oauth_bearer_token}"},
        ),
        tool_filter=["de-identify", "re-identify"],
    )

Note: The tool_filter keeps the agent's tool surface area tight — the MCP server may expose additional tools, but we only surface the two the agent needs.

De-identify takes unstructured text, detects sensitive data (PII, credit cards, SSNs, etc.), and replaces each value with a Skyflow vault token. The tokens are consistent (the same value produces the same token), which preserves referential integrity across systems.

Re-identify resolves tokens back to their original values. Skyflow's polymorphic privacy tokens can be resolved in context even within loosely-structured text, not just structured database columns. That's what makes them work for agent conversations as well as traditional data pipelines.
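To illustrate why consistent tokens matter, here is a toy model of the round trip. It is emphatically not how Skyflow generates or stores tokens: the HMAC-based token format, the in-memory `vault` dictionary, the SSN-only regex, and both function names are assumptions made purely for the example.

```python
import hashlib
import hmac
import re
from typing import Dict

SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
TOKEN_PATTERN = re.compile(r"\[SSN_[0-9a-f]{8}\]")


def toy_deidentify(text: str, key: bytes, vault: Dict[str, str]) -> str:
    """Replace each SSN with a deterministic token and record the mapping."""
    def replace(match: "re.Match[str]") -> str:
        value = match.group(0)
        # Keyed hash: the same value under the same key yields the same token.
        digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:8]
        token = f"[SSN_{digest}]"
        vault[token] = value
        return token
    return SSN_PATTERN.sub(replace, text)


def toy_reidentify(text: str, vault: Dict[str, str]) -> str:
    """Resolve known tokens wherever they appear in free text."""
    return TOKEN_PATTERN.sub(lambda m: vault.get(m.group(0), m.group(0)), text)


vault: Dict[str, str] = {}
key = b"demo-key"  # placeholder secret for the toy example
first = toy_deidentify("My SSN is 123-45-6789", key, vault)
second = toy_deidentify("SSN on file: 123-45-6789.", key, vault)
```

Both `first` and `second` carry the same token, so a downstream system can join or compare the two records without ever seeing the underlying value.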

The MCP server wraps these APIs in a standards-compliant, easy-to-integrate interface. No custom SDK, just McpToolset pointed at a URL.

Authentication and Access Control

Gemini Enterprise makes authentication easy by providing direct OAuth 2.0 support. When a user messages the A2A agent in Gemini Enterprise, they're automatically prompted to authenticate with Skyflow in their browser before their message is delivered.

That temporary bearer token is what authorizes operations against the Skyflow MCP Server. It's not a static API key stored in a secret — it's a dynamically issued, JWT-based, user-scoped credential governed by Skyflow's policy engine.

What the bearer token can actually do is determined by policies configured in Skyflow, not hardcoded in the agent.

To enable seamless authentication flows, the agent card (the standard interface through which A2A agents are made discoverable) advertises the OAuth endpoints, so A2A clients can discover exactly how to authenticate before they send a message:

    {
      "securitySchemes": {
        "oauth2": {
          "type": "oauth2",
          "flows": {
            "authorizationCode": {
              "authorizationUrl": "https://your-idp.com/authorize",
              "tokenUrl": "https://your-idp.com/token",
              "scopes": {
                "agent:privacy": "Access data privacy operations."
              }
            }
          }
        }
      }
    }
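From the client's side, discovery amounts to reading that structure out of the card. The sketch below shows one way a client might extract the OAuth details from an already-fetched agent card. The `oauth_flows` helper is hypothetical, and the sample card simply repeats the placeholder URLs from the fragment above.

```python
from typing import Dict, List

# Sample card fragment with the same placeholder endpoints as above.
SAMPLE_CARD = {
    "securitySchemes": {
        "oauth2": {
            "type": "oauth2",
            "flows": {
                "authorizationCode": {
                    "authorizationUrl": "https://your-idp.com/authorize",
                    "tokenUrl": "https://your-idp.com/token",
                    "scopes": {"agent:privacy": "Access data privacy operations."},
                }
            },
        }
    }
}


def oauth_flows(agent_card: dict) -> List[Dict[str, object]]:
    """List every OAuth 2.0 flow the card advertises, with its endpoints and scopes."""
    found: List[Dict[str, object]] = []
    for scheme_name, scheme in agent_card.get("securitySchemes", {}).items():
        if scheme.get("type") != "oauth2":
            continue  # ignore API-key or other scheme types
        for flow_name, flow in scheme.get("flows", {}).items():
            found.append({
                "scheme": scheme_name,
                "flow": flow_name,
                "authorization_url": flow.get("authorizationUrl"),
                "token_url": flow.get("tokenUrl"),
                "scopes": sorted(flow.get("scopes", {})),
            })
    return found


flows = oauth_flows(SAMPLE_CARD)
```

A client would then start the advertised authorization code flow, requesting the listed scopes, before sending its first message.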

On the agent side, authentication middleware validates every inbound A2A request. It introspects the auth token, checks for the agent:privacy scope, and rejects anything that doesn't pass so that only credentials explicitly authorized to access the agent are allowed. The agent card discovery endpoint stays public so clients can find the agent and learn how to authenticate. This will probably be familiar if you’ve built remote MCP servers before.
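The decision logic described above can be sketched as a single pure function. This is an illustration under assumptions, not our production middleware: the `authorize_request` name, the status-code return value, and the `introspect` callable are hypothetical, and the introspection result is assumed to follow the OAuth 2.0 Token Introspection format (an `active` flag and a space-delimited `scope` string).

```python
from typing import Callable, Mapping, Optional

PUBLIC_PATHS = {"/.well-known/agent.json"}  # discovery stays unauthenticated
REQUIRED_SCOPE = "agent:privacy"

# Hypothetical introspection hook: takes a token, returns its claims or None.
Introspector = Callable[[str], Optional[Mapping[str, object]]]


def authorize_request(path: str, headers: Mapping[str, str],
                      introspect: Introspector) -> int:
    """Return the HTTP status an inbound request should receive.

    200 = allow, 401 = missing or invalid token, 403 = token lacks the scope.
    """
    if path in PUBLIC_PATHS:
        return 200
    scheme, _, token = headers.get("Authorization", "").partition(" ")
    if scheme.lower() != "bearer" or not token.strip():
        return 401
    claims = introspect(token.strip())
    if not claims or not claims.get("active"):
        return 401
    granted = str(claims.get("scope", "")).split()
    return 200 if REQUIRED_SCOPE in granted else 403
```

Keeping the check free of framework types makes it straightforward to wrap in whichever ASGI or WSGI middleware the deployment uses, and to test with a fake introspector.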

From the user's perspective: click "Allow" once, then talk to the agent. From the security perspective: every request is authenticated, scoped, policy-governed, and auditable.

What's Next?

The Runtime Data Security Agent is live on Gemini Enterprise Agent Marketplace now. Skyflow customers can install it in Gemini Enterprise and try it out today.

A2UI experience. The agent's MCP tools already do more than de-identify text: they also return the types of sensitive data detected, along with confidence scores. We’re working on enhancements that let the agent render this detail as a rich user experience, powered by Skyflow’s detection engines and enabled by Google’s A2UI.

Skyflow Runtime MCP Server general availability. The MCP server powering the agent is coming as a standalone product (currently in preview). Because it's standards-compliant MCP, it works anywhere you can point an MCP client — Claude Desktop, LangChain, your own orchestration layer, or any of the growing number of platforms adopting the protocol. Build once, connect everywhere. Get in touch to join our preview customers!

More security and privacy skills. The OWASP GenAI Risks and Mitigations skill is already published and available as a standalone skill. You can try it out in any Skills-compatible client or drop it into your own ADK agents. We’re working on more open source skills grounded in real industry intelligence. Thanks to OWASP for consistently publishing quality resources for practitioners and making them available to everyone.

Fully Sanitized Agent. The Runtime Data Security Agent de-identifies data on demand: you ask it to tokenize something, and it does. But what if the model itself never saw sensitive data at all? We're testing a new agent that uses the ADK's before_agent and after_agent callbacks to de-identify all input before it reaches the model and re-identify output afterward, so the model reasons entirely over contextual privacy tokens. The ADK's callback system, together with the A2A integration in Gemini Enterprise, is what makes this possible: the before_agent/after_agent, before_model/after_model, and before_tool/after_tool callbacks give you a hook at every stage of the agent lifecycle, which is exactly what you want for privacy enforcement. See the ADK docs for more on the available callbacks. Get in touch if you want to participate in the preview!
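The before/after pattern can be sketched without any framework at all. The code below is a conceptual illustration, not the ADK callback API and not the agent we are testing: `sanitized_turn`, the in-memory `DEMO_VAULT`, and the stand-in model are all hypothetical. In an ADK agent, the two hooks would be registered as callbacks rather than called inline.

```python
from typing import Callable, List

# Toy vault mapping tokens to original values (hypothetical data).
DEMO_VAULT = {"[EMAIL_1]": "ana@example.com"}


def demo_deidentify(text: str) -> str:
    """Stand-in for the 'before' hook: swap known values for tokens."""
    for token, value in DEMO_VAULT.items():
        text = text.replace(value, token)
    return text


def demo_reidentify(text: str) -> str:
    """Stand-in for the 'after' hook: swap tokens back to values."""
    for token, value in DEMO_VAULT.items():
        text = text.replace(token, value)
    return text


def sanitized_turn(user_text: str,
                   model: Callable[[str], str],
                   deidentify: Callable[[str], str],
                   reidentify: Callable[[str], str]) -> str:
    """Run one turn so that the model only ever sees tokenized text."""
    safe_input = deidentify(user_text)   # before the model is invoked
    safe_output = model(safe_input)      # model reasons over tokens only
    return reidentify(safe_output)       # after the model responds


seen_by_model: List[str] = []


def recording_model(prompt: str) -> str:
    """Fake model that records what it was shown and echoes it back."""
    seen_by_model.append(prompt)
    return f"Noted: {prompt}"


reply = sanitized_turn("Email ana@example.com", recording_model,
                       demo_deidentify, demo_reidentify)
```

The point of the structure is that the sensitive value appears in the user's input and in the final reply, but never in anything the model receives.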

For the business value overview of this agent launch, including the loyalty escalation use case, read the companion blog:

Build AI Agents on Gemini Enterprise Agent Platform Without Exposing Sensitive Data

Thank you!

Data privacy and security are more important than ever, and we hope this provides an example of what’s possible when you prioritize and design for privacy with Skyflow and Google Cloud. If you care about this, we care about you. Want more? Connect on LinkedIn, check out the skills repo, find the Skyflow team at Google Next, and contact Skyflow to get access to the preview.